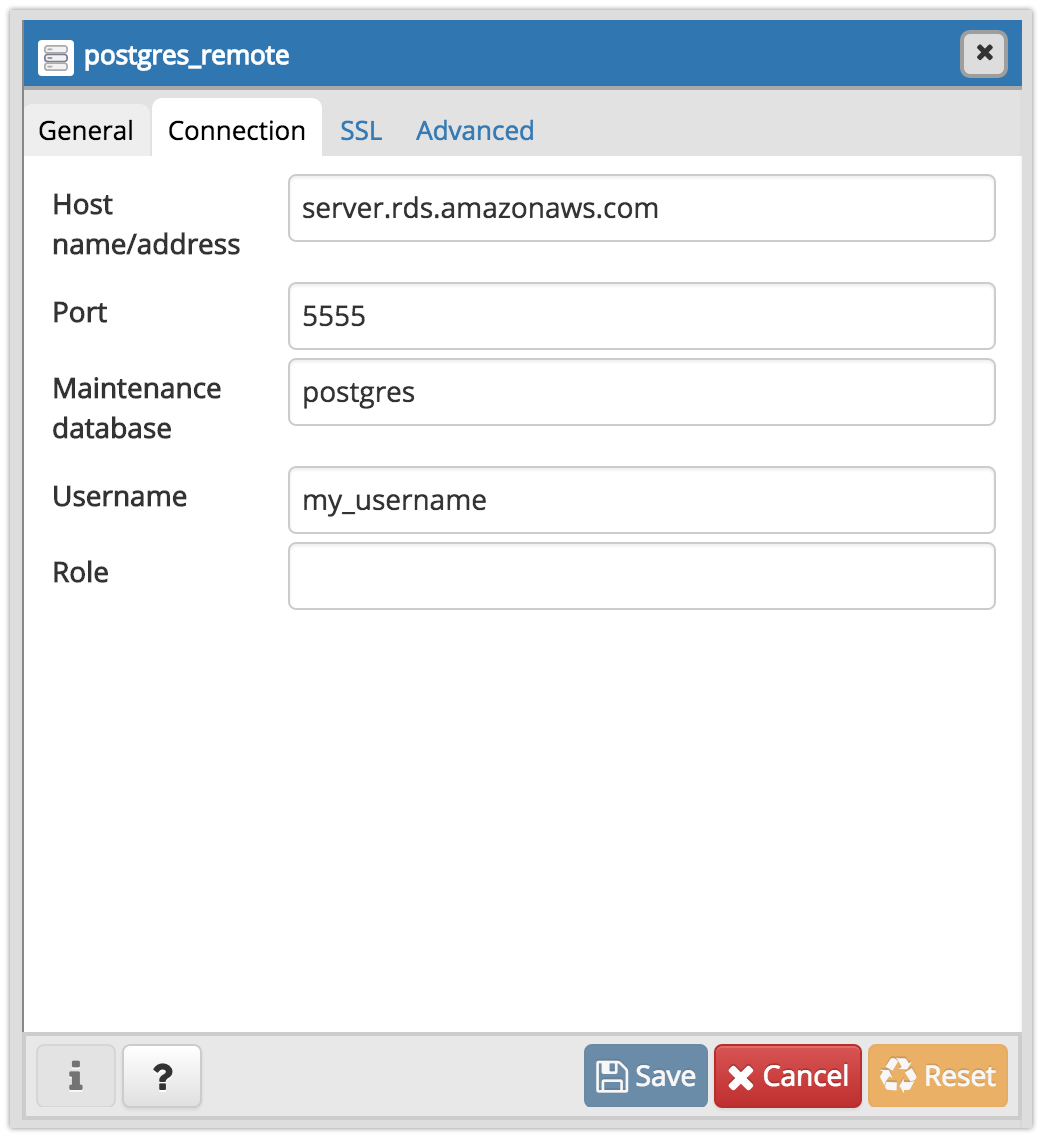

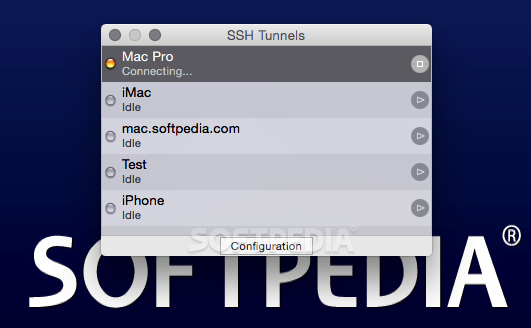

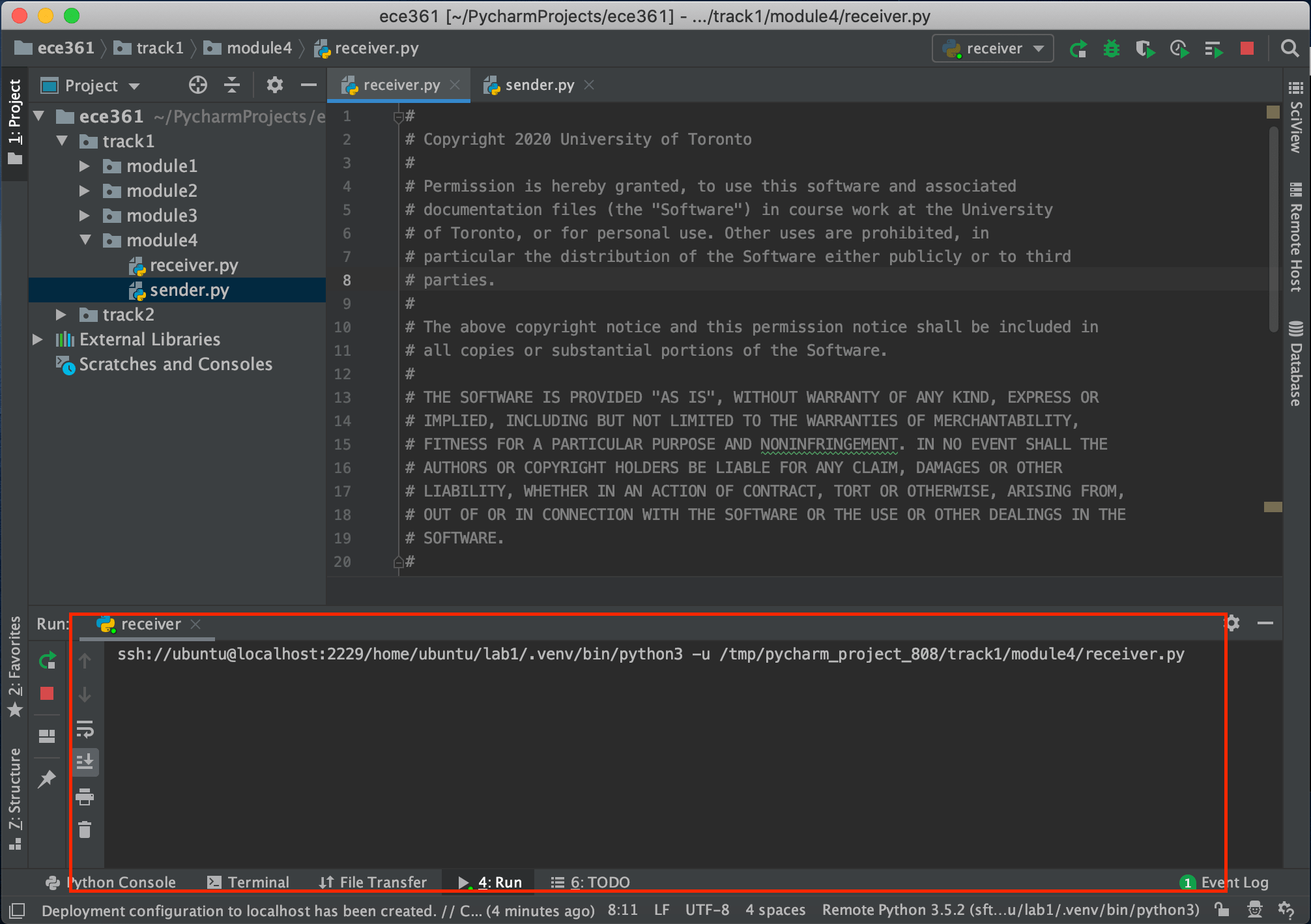

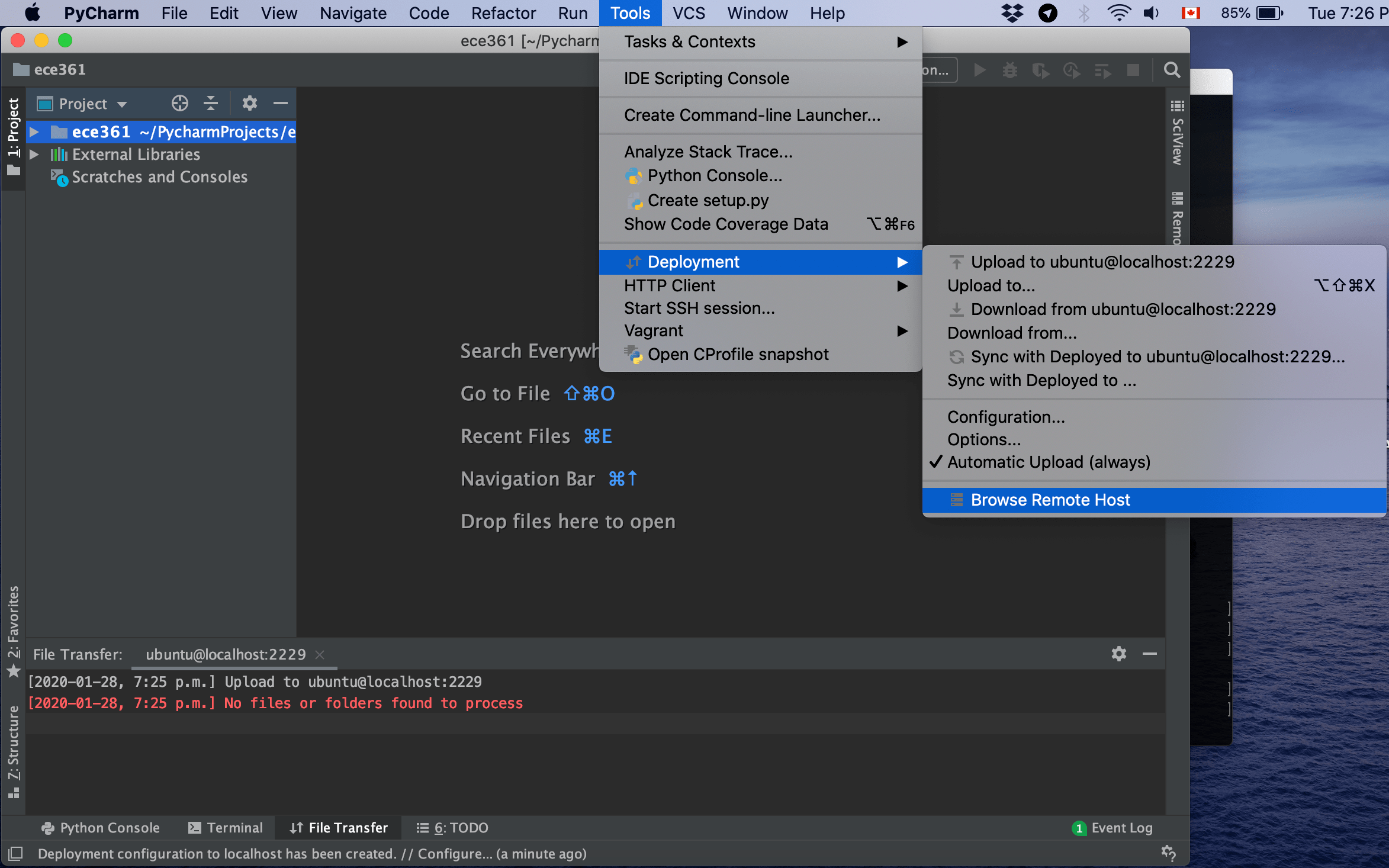

Let's connect PyCharm to the Raspberry Pi. Go to File | Create New Project, and choose Pure Python (we'll add Flask later, so you could choose Flask here as well if you'd prefer). Then use the gear icon to add an SSH remote interpreter. Use the credentials that you've set up for your Raspberry Pi.

JetBrains Gateway is used as an entry point to connect to a remote server via SSH. It launches JetBrains Client, which is a thin client that enables you to work with your remote project.

I have two remote hosts and my local computer: let them be host1 and host2. The first time, I used the PyCharm remote interpreter via an SSH connection to host1, and it worked really well: remote deployment, running all scripts on host1, and so on. Now I need to copy my project to host2 and run some code on that machine (to use all the ...

PyCharm SSH tunneling via local ssh config (`~/.ssh/config`): I use ssh deployment on servers via ssh tunnels, and each of them has specific options and port forwarding placed in `~/.ssh/config`. By default, PyCharm uses its own ssh client for SFTP deployment, so it doesn't work with these deployment servers.

Delete `~/.pycharm_helpers` from the remote host and kill all open SSH sessions that may still be running in the background, or reboot the remote host, and try again.

I am using Windows 7 with Python 3.4 (with PyCharm) and am trying to access a remote MySQL database through SSH with a private key, just as it works with MySQL Workbench in the picture. I use SSHTunnel to set up the SSH tunnel. Then I try to connect through the MySQL connector for Python as follows: `cnx = mysql.connector.connect(user='yoann_builder', password='pass', host="127.0.0.1", port=3307)`. The connection fails with: 2003: Can't connect to MySQL server on '127.0.0. [...]

This means that for now, you will have to allow access to the database from many IPs. An alternative might be to connect through some sort of tunnel (e.g. ... As a workaround, you can set up the SSH tunnel from your terminal and then configure Sequel Ace to connect over localhost.

Under the Connection menu, expand SSH and select Tunnels. Check the Local radio button to set up local, Remote for remote, and Dynamic for dynamic port forwarding. When setting up local forwarding, enter the local forwarding port in the Source Port field, and in Destination enter the destination host and port, for example, localhost:5901. Specify the IP address of the SSH server and the port on the remote host to forward the connection: 192.168.31.90:3389.

Free X server for Windows with tabbed SSH terminal, telnet, RDP, VNC and ... feature in the MobaSSHTunnel list and in the sessions SSH jump hosts list.

Local code editors such as VS Code or PyCharm to make the most of coding on Colab. ... `.ssh/authorized_keys`) or add it as a deploy key if you are ...

The Databricks SQL Connector for Python allows you to develop Python applications that connect to Databricks clusters and SQL warehouses. It is a Thrift-based client with no dependencies on ODBC or JDBC. It conforms to the Python DB API 2.0 specification and exposes a SQLAlchemy dialect for use with tools like pandas and alembic, which use SQLAlchemy to execute DDL.

This connector uses Arrow as the data-exchange format, and supports APIs to directly fetch Arrow tables. Arrow tables are wrapped in the ArrowQueue class to provide a natural API to get several rows at a time.

You are welcome to file an issue for general use cases. You can also contact Databricks Support.

Install the library with `pip install databricks-sql-connector`. Note: Don't hard-code authentication secrets into your Python code.

    import os
    from databricks import sql

    # Read connection details from the environment instead of hard-coding them.
    host = os.getenv("DATABRICKS_HOST")
    http_path = os.getenv("DATABRICKS_HTTP_PATH")
    access_token = os.getenv("DATABRICKS_ACCESS_TOKEN")

    connection = sql.connect(
        server_hostname=host,
        http_path=http_path,
        access_token=access_token)

    cursor = connection.cursor()
    cursor.execute('SELECT * FROM RANGE(10)')
    result = cursor.fetchall()
    for row in result:
        print(row)

    # Release the cursor and the connection when done.
    cursor.close()
    connection.close()

In the example above, `server_hostname` is the Databricks instance host name, and `http_path` is the HTTP Path either to a Databricks SQL endpoint or to a Databricks Runtime interactive cluster.
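The note about not hard-coding secrets can be taken one step further by checking that the three environment variables are actually set before trying to connect. The following is only a sketch: the helper name `load_connection_settings` and the `REQUIRED_VARS` tuple are hypothetical and not part of the connector; only the variable names and the `server_hostname`, `http_path`, and `access_token` keyword names come from the text above.

```python
import os

# Environment variable names used in the text above.
REQUIRED_VARS = (
    "DATABRICKS_HOST",
    "DATABRICKS_HTTP_PATH",
    "DATABRICKS_ACCESS_TOKEN",
)


def load_connection_settings(env=None):
    """Return keyword arguments for the connector, or raise if any are missing.

    `env` defaults to os.environ; passing a plain dict makes the helper easy to test.
    This function is a hypothetical convenience, not part of databricks-sql-connector.
    """
    env = os.environ if env is None else env
    # Treat unset and empty values the same way: both are unusable.
    missing = [name for name in REQUIRED_VARS if not env.get(name)]
    if missing:
        raise RuntimeError("missing environment variables: " + ", ".join(missing))
    return {
        "server_hostname": env["DATABRICKS_HOST"],
        "http_path": env["DATABRICKS_HTTP_PATH"],
        "access_token": env["DATABRICKS_ACCESS_TOKEN"],
    }
```

With the connector installed, the result could be passed along as `sql.connect(**load_connection_settings())`, so a missing variable fails early with a readable message rather than deep inside the connection attempt.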

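For the MySQL-over-SSH question earlier in this post, error 2003 on 127.0.0.1 generally means nothing is accepting connections on the forwarded local port at the moment the connector tries to use it. Here is a rough sketch of one way to check that and to keep the tunnel open for the lifetime of the query. It assumes the `sshtunnel` and `mysql-connector-python` packages are installed, that the SSH server listens on port 22, and that MySQL listens on 127.0.0.1:3306 on the remote side; those ports, the function names, and all parameters are assumptions for illustration, not details from the original question.

```python
import socket


def port_is_open(host, port, timeout=2.0):
    """Return True if a TCP connection to (host, port) succeeds within the timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False


def query_through_tunnel(ssh_host, ssh_user, ssh_key_path, db_user, db_password):
    """Open an SSH tunnel, run a trivial query through it, and return the rows.

    Hypothetical helper; SSH port 22 and remote MySQL port 3306 are assumed.
    """
    # Third-party imports stay local so port_is_open works without them installed.
    from sshtunnel import SSHTunnelForwarder
    import mysql.connector

    with SSHTunnelForwarder(
        (ssh_host, 22),
        ssh_username=ssh_user,
        ssh_pkey=ssh_key_path,
        remote_bind_address=("127.0.0.1", 3306),
        local_bind_address=("127.0.0.1", 3307),
    ) as tunnel:
        # Fail with a clear message if the forwarded port is not reachable.
        if not port_is_open("127.0.0.1", tunnel.local_bind_port):
            raise RuntimeError("local end of the SSH tunnel is not accepting connections")
        cnx = mysql.connector.connect(
            user=db_user,
            password=db_password,
            host="127.0.0.1",
            port=tunnel.local_bind_port,
        )
        try:
            cursor = cnx.cursor()
            cursor.execute("SELECT 1")
            return cursor.fetchall()
        finally:
            cnx.close()
```

Connecting inside the `with` block matters: once the block exits the tunnel is closed, and any later connection attempt to port 3307 would be refused.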